“Computers enable fantasies” – On the continued relevance of Weizenbaum’s warnings

“The computer has long been a solution looking for problems—the ultimate technological fix which insulates us from having to look at problems.” – Joseph Weizenbaum (1983)

Trying to keep up with all of the news surrounding current happenings in the world of computers is overwhelming, to put it mildly. Even more so if you are trying to separate the hype and hope from the more banal realities. And, in this moment, this is made still more complicated by the way that many of the places you might turn to for information seem to themselves be awash in things like AI generated images and screenshots of text responses from ChatGPT. And though there is nothing particularly new about conversations and questions regarding the implications of this or that new program or platform—the present moment seems to be another period in which such discussions are difficult to ignore.

And though these discussions are not always put explicitly in the terms of “what will this mean for us?” that seems to be the question percolating just under the surface in much of the moment’s discourse. Or, to put it slightly differently:

“On the one hand the computer makes it possible in principle to live in a world of plenty for everyone, on the other hand we are well on our way to using it to create a world of suffering and chaos. Paradoxical, no?”

The above quotation comes from a lecture the computer scientist and social critic Joseph Weizenbaum delivered in 1983, titled “The paradoxical role of the computer.” And though this is a clash that we still find ourselves wrestling with today, it can be useful to take a step back and consider how it could have been foreseen some forty years ago. While 1983 is certainly not ancient history, when it comes to the history of computing, forty years ago can certainly seem like a time when dinosaurs still roamed the earth. After all, 1983 was pre-smartphone and prior to the genuine takeoff of the personal computer; it was before the web and therefore also before Web 2.0 and Web3. Heck, quite a few of the figures who dominate contemporary discussions around computer technology hadn’t even been born yet (or were still children). 1983 was a long time ago for computers, yet for some figures who were paying attention, figures like Weizenbaum, it was already possible to see the path that the eager embrace of computers was putting societies on. And though such figures spoke out in hopes that the direction would be changed, it is likely that many of them would not be too surprised by the messes we find ourselves in at present.

When it comes to the current debates around things like AI art, self-driving cars, and ChatGPT—there is no substitute for keeping an eye on those debates themselves. Whether these are playing out in articles and op-eds or podcasts and social media threads, these are discussions unfolding in real time commenting on technologies that are themselves in flux. You should take the time to go listen to this essential interview of Timnit Gebru on Paris Marx’s Tech Won’t Save Us podcast, read Abeba Birhane and Deborah Raji’s vital article “ChatGPT, Galactica, and the Progress Trap” over at Wired, and be sure to check out Meredith Broussard’s Artificial Unintelligence, Safiya Noble’s Algorithms of Oppression, and Sarah Roberts’s Behind the Screens (all of which should be required reading).

If you want to understand the current debates, you [gasp] need to actually pay attention to the current debates. Nevertheless, it is always worthwhile to glance in the rearview mirror if you want to make sense of how it is that we got here. While Weizenbaum earned himself a place in the history of computing, the history of AI, and the history of technological criticism, he deserves more than a footnote or a passing reference. And this is because many of the issues that Weizenbaum identified in the days when computing and AI were still very much in their infancy are the very issues we are still debating today. Sure, the names of the specific programs and their advocates have changed, but the core questions of “what is this, and what does this mean for us?” remain largely the same. Make no mistake, some of Weizenbaum’s predictions were wrong, some of his language is dated, and the milieu of social critics to which Weizenbaum belonged is behind us. Yet engaging with past critics of technology is a reminder that many decades ago there were some who saw the direction we were heading in—their work is a reminder that technology does not drive history, people drive history, though oftentimes those people driving history have started to worship technology.

Writing and thinking at a point in time when the computer was ascendant but where it had not yet become inextricably bound up in daily life, Weizenbaum does not treat the computer (or AI) as a fait accompli. Weizenbaum’s thought remains worth considering not because he was a forerunner of contemporary criticism, and not because of his technical qualifications, but because even if his critiques do not perfectly explain the current branches of the computing debates they still get us back to the tangled roots.

It Isn’t Magic, It’s a Magic Trick

In 1966, commenting on heuristic programming and artificial intelligence, Weizenbaum observed how “in those realms machines are made to behave in wondrous ways, often sufficient to dazzle even the most experienced observer.” These lines can be found in the opening paragraph of the article with which much of Weizenbaum’s reputation is still bound up, the article from the Communications of the ACM in which Weizenbaum describes “ELIZA—A Computer Program For the Study of Natural Language Communication Between Man and Machine.” As Weizenbaum would explain in the article, ELIZA was “a program which makes natural language conversation with a computer possible.” Granted, this was not a free-flowing discussion that could range across any possible topic, but a structured conversation in which the program and the human participant both played well defined parts. Specifically, the program took on the role of a Rogerian psychotherapist with the human interlocutor taking on the role of the patient. Interacting with ELIZA by way of typing, the human participant would offer a series of statements and the program would respond by transforming these statements into questions that would continue the conversation. ELIZA, named for the character from Pygmalion, responded to the user by rearranging the words given by the user themselves and presenting them as a question—it followed a script that could turn “I am BLAH” into “How long have you been BLAH.”
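To make the mechanism concrete, here is a minimal sketch of the kind of scripted transformation described above. It is not Weizenbaum's actual ELIZA script: the rule list, the fallback reply, and the function name `respond` are hypothetical choices made for illustration, and only the “I am BLAH” rule comes from the description in this essay.

```python
import re

# Hypothetical rules for illustration only; not taken from the original ELIZA script.
# Each rule pairs a pattern with a template that hands the user's own words back.
RULES = [
    (re.compile(r"^i am (.+)$", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"^i feel (.+)$", re.IGNORECASE), "Why do you feel {0}?"),
]

# Assumed generic reply for statements that match no rule.
FALLBACK = "Please go on."


def respond(statement: str) -> str:
    """Turn a statement into a question by rearranging the user's own words."""
    # Drop surrounding whitespace and trailing sentence punctuation.
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.match(text)
        if match:
            return template.format(match.group(1))
    return FALLBACK


print(respond("I am unhappy."))
```

Nothing in the sketch models meaning: it matches surface patterns and reuses the words it was given, which is why any sense of being understood has to come from the person typing.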

Based on the way that ELIZA responded to the messages it received, the program made it seem as though it understood what had been said to it. Of course, as Weizenbaum noted, this was really just an “illusion,” albeit one to which the human interlocutor contributed by believing that in order for ELIZA to respond it must have truly understood them, while in reality the program was simply following a script that dictated how it would take, transform, and then offer the user’s words back to them. This tendency for people to impute actual intelligence to a system that they perceive to be intelligent is what has come to be known as the “ELIZA effect.” Yet, for Weizenbaum, the essential thing was that ELIZA was not intelligent; it did not (it could not) actually understand what people were saying to it, but as long as the person was willing to keep playing along they projected onto the program the “illusion” of understanding.

Weizenbaum was well aware of the ways that programs like ELIZA could “behave in wondrous ways” and even “dazzle” yet he had also initially believed that “once a particular program is unmasked, once its inner workings are explained…its magic crumbles away; it stands revealed as a mere collection of procedures, each quite comprehensible.” His thinking was that these programs did not so much offer real magic as a sort of magic trick—at first it is quite impressive when a magician pulls a rabbit out of a hat or makes their assistant disappear, but once the trick is explained what was once uncanny becomes at best an impressive feat of technical skill and misdirection. Weizenbaum, especially at first, believed that those who were unfamiliar with the inner working of computers were more likely to get swept away by the illusion, but he also thought that once the processes were made sufficiently clear anyone would be able to make sense of what was really taking place.

Granted, what largely transformed Weizenbaum into an outspoken critic of AI and computers was his revelation that even once the processes were explained many people still bought into the “illusion.” And what’s more that even many people who understood the inner workings of computers quite well could still get swept away as well. Weizenbaum observed that ELIZA demonstrated “if nothing else, how easy it is to create and maintain the illusion of understanding, hence perhaps of judgement deserving of credibility” an observation he followed up by noting “A certain danger lurks there.”

These “illusions” are alive and well today, as new developments in AI and computing are praised precisely for being “wondrous” and for their ability to “dazzle.” Yet Weizenbaum reminds us that there are technical processes and systems at work. Sure, they are significantly more complex than ELIZA ever was, but we should not allow ourselves to be so overwhelmed by the magic that we lose interest in how the trick is actually being pulled off.

Which brings us to the next point.

We Allow Ourselves to Be Fooled

Roughly four years before Weizenbaum’s article about ELIZA appeared in the Communications of the ACM he published a perhaps even more significant, if often overlooked, article in the pages of Datamation. An article with the noteworthy title “How to Make a Computer Appear Intelligent.” At the core of this article was not ELIZA, or some other sort of program with which a person communicated using “natural language,” but instead a program that played the game of Go-MOKU/Five-in-a-row. While the program itself may not really be the most interesting thing in the world (it seems to have been capable of playing a very good game of Go-MOKU), Weizenbaum’s comments about the program are what is interesting. In many ways, Weizenbaum’s observations here are ones that he would echo later in his various writings about ELIZA, yet one significant difference is that in this 1962 article Weizenbaum placed greater focus not on the experience of the user interacting with the program, but on the person responsible for creating the program.

As Weizenbaum put it “the author of an ‘artificially intelligent’ program” is “clearly setting out to fool some observer for some time.” And thus, at least to a certain extent, the degree to which a program could be counted as a “success” would be determined “by the percentage of the exposed observers who have been fooled multiplied by the length of time they have failed to catch on.” In many ways this is quite similar to the comments Weizenbaum would later make about ELIZA, and in talking about his Go-MOKU program, Weizenbaum specifically noted that the program’s algorithm was able “to create and maintain a wonderful illusion of spontaneity.” Yet, it is noteworthy that in this article Weizenbaum is responding to the question implied in his title of “how to make a computer appear intelligent” by putting a heavy emphasis on the word “appear” and suggesting that the way to achieve this is to “fool” the people interacting with it, and to try to keep them from catching on. Significantly, even in 1962, Weizenbaum was already noting that when it came to the matter of fooling people, it was also quite possible for the program’s author to wind up fooling themselves, as he put it “programs which become so complex…that the author himself loses track, obviously have the highest IQ’s.” In other words, understanding the program did not guarantee protection, for it was quite possible that the complexity of a program would make it so that those involved in it would not feel that they really understood it any longer. Taken together, these comments that appeared in Datamation and the articles on ELIZA highlight the illusion-spinning power of many programs, while noting that in many cases the illusion is not incidental but the entire point.
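Read literally, Weizenbaum's tongue-in-cheek measure of “success” can be written as a one-line formula. The symbols below are assumptions introduced here for illustration and do not appear in the Datamation article: $p$ is the fraction of exposed observers who were fooled, and $t$ is the length of time they failed to catch on.

```latex
S = p \times t,
\qquad
p = \frac{\text{observers fooled}}{\text{observers exposed}}
```

As a purely hypothetical example, a program that fools 30 of 50 observers ($p = 0.6$) for 10 minutes each would score $S = 0.6 \times 10 = 6$. Nothing in the measure rewards actual intelligence, only how long the appearance of it holds up.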

Regardless of whether or not you had previously heard of Joseph Weizenbaum, it is fairly likely (forgive the assumption) that you have heard the old adage “if it seems too good to be true, it is.” Unfortunately, this is something that seems to be consistently forgotten when it comes to new technologies that are often greeted with uncritical enthusiasm. Yet, it quite often happens that after a bit of time passes, it becomes clear that the technology isn’t really quite as transformative as its advocates claimed it would be, or—even more significantly—the early stories weren’t telling the whole story. Case in point, we can consider some recent revelations about some of the technologies about which people have been most excited. Self-driving cars have been a high-tech fantasy for quite some time, and one of the main selling points for Tesla automobiles…yet it seems that a video (admittedly from a few years ago) touting the autopilot capabilities of the vehicles was “staged.” On a similar note, albeit about a different technology, a recent exposé has revealed how “OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic.” There is much in these stories of great significance that raises serious ethical questions—but another element of these pieces is that they reveal how a part of maintaining the “wondrous” and “dazzling” illusion of these technologies involves deliberately fooling people, or at the very least covering up relevant information. Whether that is staging a demonstration, or hiding the exploited workers performing essential labor, these parts of the stories are hidden. And once they are revealed, there will certainly still be some who cling to the “illusion” (refusing to believe that they have been duped), but it may also signal the point at which it becomes harder to fool people.

The cases of Tesla’s staged demonstration and OpenAI’s exploitation of workers in Kenya are just two examples. And they are hardly the first instances where it has turned out that high-tech magic was really just a lot of smoke and mirrors. The surprising thing about these revelations is not the revelations themselves, but that we still find it so surprising when they come out. In 1962, Weizenbaum had argued that the “success” of an “artificially intelligent” program hinged largely on its ability to fool people. And if a program could do a suitably passable job of fooling people at first, many of those people would then project their own fantasies onto the program, deepening their own commitment to the fantasy.

This should be a reminder to approach excitedly hyped new technologies with some healthy skepticism. It is worth asking “what am I not seeing?” and “am I being fooled?”

And if you are being fooled, it is worth considering who it is that is trying to fool you…

Don’t Focus on the Machine, Focus on the People Behind the Machine

When writing of those who were taken in by the various illusions conjured up by computing technologies, Weizenbaum was generally quite sympathetic towards those who were not technical specialists but were dazzled by what they saw. However, Weizenbaum rarely restrained the ire he felt towards his peers in the computing field, whom he held responsible for spinning those illusions, even as he increasingly came to the conclusion that many of them had fallen under their own spell.

Though Weizenbaum frequently inveighed against those he dubbed the “artificial intelligentsia” in his talks and in his writing, a moment where his frustration with others in the computing field was particularly on display was in a lengthy review written for The New York Review of Books in 1983 under the title “The Computer in Your Future.” There, Weizenbaum was reviewing Edward Feigenbaum and Pamela McCorduck’s book The Fifth Generation: Artificial Intelligence and Japan’s Computer Challenge to the World, and it was not a particularly kind review. While this is not the time or place to offer a full summary of The Fifth Generation, in the book Feigenbaum and McCorduck made several exciting claims about the (then current) status of computing amidst ambitious forecasts of what to expect from the “fifth generation” computers that they claimed would be appearing soon. While, arguably, one of the reasons you probably don’t hear much talk of The Fifth Generation today is that many of its prophecies failed to come true, at the time of his review the book’s claims carried an air of feasibility, especially as Feigenbaum and McCorduck were figures with esteemed reputations in the computing world. And yet, in response to the claims of the book, Weizenbaum put forth the following question in regard to “the computer enthusiasts,” namely “What have they boasted of before and how were such boasts justified in reality?” Weizenbaum added that many of Feigenbaum and McCorduck’s prophecies were pretty much identical to ones that “computer enthusiasts” had been making decade after decade.

Though Weizenbaum certainly presented many direct quibbles with the predictions made in the book, the review made clear that his primary frustration was not with the veracity of the claims, but with the overall vision of society being sketched out in the book. It was a vision of “a world in which it will hardly be necessary for people to meet one another directly” in which every problem would be inevitably and neatly solved by the introduction of computers. Writing of the enthusiastic embrace of a fully computerized world, Weizenbaum grumbled, “These people see the technical apparatus underlying Orwell’s 1984 and, like children on seeing the beach, they run for it” a point to which Weizenbaum added “I wish it were their private excursion, but they demand that we all come along.” Weizenbaum’s biting review earned a dismissively sharp response from Feigenbaum and McCorduck that was summed up in their response’s first line “There you go again” (really, that was their reply’s first sentence), to which Weizenbaum in turn responded with a further withering denouncement of the book (and the fact that the authors had responded to his critiques primarily by insulting him). The rift the review opened up was never really healed, and the sparring was but one of the more particularly visible clashes that opened up between the increasingly critical Weizenbaum and many of his colleagues. For at base what Weizenbaum was so denouncing in his review was not this or that specific claim, but the entire worldview undergirding the book—an inevitability-tinged adoration for computing technologies that was heavy on the hopes and relatively unconcerned with the consequences.

Weizenbaum’s review of The Fifth Generation encapsulates several key components of his overall critical stance towards computing. These included: a skepticism towards the promises being made by “computer enthusiasts” about what computers could do (or were about to be able to do), a rejection of the idea that a certain version of the computerized future was inevitable (and being driven by the computer itself), and a call for a real sense of responsibility. Weizenbaum rejected the idea that computers were an autonomous force; he was keenly aware that what was driving computers were people and institutions, and the particular values of those people and institutions. Granted, Weizenbaum did have a concern that for many of these people the computer was not simply a means to an end but had become an end in and of itself. In an interview that appeared in the book Speaking Minds, Weizenbaum argued that, despite the technical and scientific veneer of the pronouncements of the “computer enthusiasts,” “the phenomenon we are seeing is fundamentally ideological…There is a very strong theological or ideological component.” Weizenbaum was hardly the only twentieth-century critic of technology to observe that technology (and computers more specifically) had become a sort of religion, with the computer representing a new sort of sun god (Mumford) and with technology becoming “the God that saves” (Ellul). Yet Weizenbaum’s place within the computing world allowed him to make this observation as an insider.

And in arguing with his colleagues in computing, the issue that Weizenbaum kept butting up against was the way in which an ideological and theological faith in technological inevitability seemed to insulate people from the recognition that they (a human being!) were making decisions. As Weizenbaum stated in his book Computer Power and Human Reason:

“The myth of technological and political and social inevitability is a powerful tranquilizer of the conscience. Its service is to remove responsibility from the shoulders of everyone who truly believes in it. But, in fact, there are actors!”

Weizenbaum’s comment can, and should, certainly be read as a demand that those working in the technological sphere accept greater responsibility for what they were doing. However, the above quotation can also be interpreted as a reminder to those outside of the technological sphere to look for the humans involved in actually making the decisions. Who are these people? What do they stand to gain from certain technological decisions? What values inform their work? What are the things they tend to overlook? These are still important questions to ask and wrestle with when we encounter excited pronouncements about some new program or gadget. We need to be wary of technologically deterministic narratives that treat certain technologies as inevitable, after all a sober analysis of many of the excited claims coming from “computer enthusiasts” makes it clear that many things touted as “inevitable” don’t show up, and when they do they look significantly different from the original promise. And though such narratives around inevitability generally have their roots amongst technologists, it is worth being mindful of the ways that much of the media further disseminates these viewpoints. After all, there are a lot of “computer enthusiasts” who aren’t technically computer professionals.

With his insistence that “there are actors,” Weizenbaum is also reminding us that we are all part of the story. That we all have choices to make, and that we all share in the responsibility. Nevertheless, Weizenbaum also reminds us not to get too taken in by the “dazzle” but to keep our eyes on the people trying to “dazzle” us. Or, as Weizenbaum put it in a piece titled “Social and Political Impact of the Long-term History of computing”:

“Guilt cannot be attributed to computers. But computers enable fantasies, many of them wonderful, but also those of people whose compulsion to play God overwhelms their ability to fathom the consequences of their attempt to turn their nightmares into reality.”

And while Weizenbaum was arguing that the attention should not solely focus on the computer, he was certainly not suggesting that we look away from the computer…

We Still Need to Talk About Computers

Despite the fact that Weizenbaum is closely associated with a particular program (ELIZA), the bulk of his critical work does not single out specific programs or companies. This is not to suggest that Weizenbaum was unaware of powerful institutions and their influence: he was quite critical of the Pentagon (and all it represented), and routinely had less than kind things to say about MIT (the university at which he was a professor). Nevertheless, something that makes Weizenbaum’s work quite distinctive from much contemporary critical work in and around computing technologies is how little time Weizenbaum spends talking about specific programs and specific companies. Indeed, in much current commentary on technology it is normal to see the focus on Meta (the company formerly known as Facebook), or on Tesla, or on ChatGPT, or on [reader: insert the name of the company/program/platform you most often think about here!]. It makes a fair amount of sense for commentary to narrowly focus in on a specific platform/company/individual; however, such a focus also carries a risk of placing all of the focus on that platform/company/individual while sparing from attention the underlying computer technologies.

In the aforementioned withering review of The Fifth Generation, Weizenbaum commented that:

“The computer has long been a solution looking for problems—the ultimate technological fix which insulates us from having to look at problems.”

As those words, from 1983, remind us, it isn’t particularly new to see the computer as the solution to every problem big and small. Yet, what Weizenbaum’s comment further gets at is the way in which the belief in technological solutions often distracts from a real analysis of the problem. After all, why try to really understand the complex nature of a problem if you can be certain that it will inevitably be fixed by a computer? To be clear, there can be no doubt that we live in a world with plenty of problems, very serious problems that desperately need to be addressed. But when computer (and computer adjacent) technologies are presented as the solution to every problem it presents a rather warped view of what the problems really are. The question of travel is clearly a real problem; however, answering this question with “self-driving cars” distracts from the problem’s roots in political and economic decisions with a one size fits all computerized fix. Furthermore, in the process of waiting for that computerized solution to arrive (and it rarely actually arrives), the problems often get worse while their underlying causes continue to be ignored. Nevertheless, the point that Weizenbaum gets at here is not simply a reminder that computer solutions are often presented to spare us from having to really confront difficult problems, but a reminder that we should not lose sight of the computer itself.

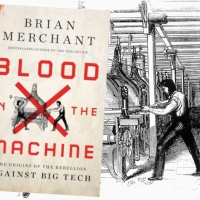

One of the things that can be somewhat disarming about revisiting Weizenbaum’s work today is the way in which his work consistently foregrounds the computer. At a moment when so much of the commentary on “tech” (positive and critical) focuses on specific platforms and companies, it can actually be a little bit surprising how rarely the conversation truly turns to the computer itself. In some respects, this serves to protect the computer itself from criticism even while opening up a space that allows for some more pointed critiques of certain applications of computing technology. While talk of a “tech lash” may largely be overblown, it is hard to deny that we have reached a point where you can publicly criticize Mark Zuckerberg without immediately being tarnished as a technophobic Luddite who really just wants everyone to go live in caves. Nonetheless, even as you can now get away with expressing distaste for Zuckerberg or Musk (and the companies with which they are associated), it is still a thornier proposition to criticize computing technologies themselves. You will be forgiven for saying that Zuckerberg and Musk are misapplying computer technologies and warping the power of these technologies for nefarious aims, but you will get yourself in a bit of trouble if you suggest that the problem is not simply about how Zuckerberg and Musk have used these technologies but about these technologies themselves. Critical commentators on technology know by now that technology isn’t neutral, but too often the unwillingness to consider that there might be something inherently worrisome about computer technologies suggests a lingering belief in the neutrality of computers. 
This creates a situation in which people can imagine that all that is needed is different CEOs, or different economic models, and then the computer will be able to be harnessed for good…but Weizenbaum warns against this tendency, emphasizing that it is not sufficient to see that the computer is not the solution to every problem; we also need to see that the computer (and the faith in it) is part of the problem.

Weizenbaum was highly critical of the computer, but he was also mindful of the reason why it had been so taken up beyond simply the realm of the technologists. For those in the policy world, for example, that the computer promised to solve every problem meant that they did not need to seriously tackle the more complex issues underlying those problems—thereby allowing these officials to pass responsibility off to the machines. And on the larger scale, as he put it in a lecture titled “The paradoxical role of the computer”:

“We have concluded a Faustian pact with our science and technology generally, and with the computer in particular. So it seems to me. And in such a pact with Mephistopheles both partners gain something: The devil the human soul and the human partner services which he much desires and finds good.”

And here Weizenbaum cuts to one of the biggest problems many of us confront when it comes to computer technologies, and one of the things that makes it easy to criticize this or that platform while making it far harder to go after computer technology itself: that many of us really do feel that we get much we desire and find good from these technologies. This observation on the “Faustian pact” is evocative of the “megatechnic bribe,” a concept from Weizenbaum’s friend and interlocutor Lewis Mumford that similarly points to the way that a promise of a share in the benefits often leads people to overlook technologies’ downsides. Yes, when it comes to computer technologies, we know that there are certainly tradeoffs involved, some of which are clearer than others, but in many cases people seem to conclude the tradeoffs are worth it. Or, by the time they decide the tradeoffs aren’t worth it, it is already too late.

Throughout his work Weizenbaum kept returning over and over to the question of the computer in society. Not this or that platform or company, the computer. And thus his work pushes us to do the same, to not only engage with the most visible manifestations of this or that program or company but to consider the underlying technology, and to force ourselves to critically evaluate the computer’s place in our society and in our world.

And if we are thinking about the computer’s place in our world, we have to think about what a computer should and should not do…

The Issue Isn’t Really About What Computers Can Do

From the outset of his book Computer Power and Human Reason, Weizenbaum stated the core point of his argument clearly, namely:

“there are certain tasks which computers ought not to be made to do, independent of whether computers can be made to do them.”

As he would go on to explain in the following chapters of the book, what had pushed him so firmly in this direction had been his experience with ELIZA. Not only in terms of seeing how many people had been fooled into believing that ELIZA actually understood them, but the reaction on the part of many (including some actual practicing psychiatrists) who imagined that a program like ELIZA would be able to replace human psychiatrists (at least in some contexts). This was an idea that greatly troubled Weizenbaum, and one that he loudly and consistently spoke out against. In designing ELIZA, Weizenbaum had modeled it in part on the way that a Rogerian psychotherapist might interact with someone seeking therapy, but his goal wasn’t to replace such psychotherapists. And this was because for Weizenbaum there was something about the relationship between a person and their therapist that was fundamentally about a meeting between two human beings. In language that was at times reminiscent of Martin Buber’s “I and Thou” formulation, Weizenbaum remained fixated on the importance of interaction between human beings. Granted, as Weizenbaum made clear over the rest of his book (and the rest of his work), he did not think that this matter of “ought” versus “can” applied strictly to psychiatric situations.

Oftentimes the discussions around computers (and AI) get quite caught up in what some new technology can do. And quite often the promises that a certain computer (or AI) can do something turn out to be rather exaggerated. Nevertheless, there can be little doubt that quite often computers (and AI) are able to do some interesting and impressive things. At the moment it is hard to get away from the mountains of AI-generated images that people are eagerly posting everywhere; with a mixture of glee and mockery, many people seem to be enjoying playing with ChatGPT; and every time you see a Tesla rolling down the street there’s possibly some small part of you that is checking to see if the driver has their hands on the wheel or if they are using autopilot. Of course, those are but a few examples. We could also think about the attempts to get everyone hooked into the Metaverse, to get everyone investing in cryptocurrencies and NFTs, and [reader: insert the high-tech hope you see getting talked about everywhere here!]. To be fair, there is a certain risk in flattening out the differences between all of these different computer-related technologies, as doing so erases serious considerations of their various risks and affordances. Nevertheless, for all of them one of the generally unasked but truly fundamental questions is not about if computers “can be made” to fulfill the particular goals of this or that project, but if computers “ought to be made” to pursue those goals.

Frankly, the “ought” versus the “can” has always been the vital question underneath our adventure with computer technology, and technology more broadly. Yet it is a question that tends to be overlooked in favor of an attitude that focuses almost entirely on the “can” and imagines that if something “can” be done it therefore should or must be done. But as Weizenbaum reminds us, technology isn’t driving these things, people are: people who are responsible for the choices they are making, and people who are so caught up in whether or not their new gadget or program “can” do something that they rarely stop to think whether it “ought” to do so.

In some of the recent debates around computers and society it seems that the matter of “ought” is starting to come to the surface more and more. Many people seem to be increasingly fervent in the belief that driving “ought” not to be entrusted to a computer program. Even as they gawk at the bizarre images churned out by AI image generators, many people seem to be of the opinion that the creation of art is best left to human artists. And even as excited proponents announce ChatGPT to be the end of the journalist, the writer, and the essay written for class—many look at the output of ChatGPT and observe that ChatGPT (much like the creators of ChatGPT) seems capable of generating an impressive façade of understanding that on closer analysis misses the point entirely. Of course, undergirding some of these reactions is a clear sense that some of these things are really just glorified plagiarism and imitation machines, that have gorged themselves (without compensation) on the works of artists and writers in order to spit something back out that seems vaguely different. And though it often isn’t directly framed in these terms, in many of these current debates what you can find is some version of this push and pull between “can” and “ought.”

When arguments of this sort start heating up, and the computer enthusiasts find themselves slightly on the defensive, they have a tendency to react by decrying anyone even mildly questioning computing as a Luddite and a technophobe. Beyond insults, this often takes the form of asking: “who are you to say we can’t do this?” A fair question, but one which prompts a retort of: “who are you to decide you can do this?” After all, it seems quite obvious that the artists whose work has been gobbled up in order to spit out those AI-generated images did not consent (and were not compensated); having made some of their work accessible online does not mean that every writer and academic agreed to (or was compensated for) having their research and theorizing fed to ChatGPT; and though there are always risks to getting in a car or crossing the street, when you agreed to share the road you did not necessarily agree to venturing into a testing course for self-driving vehicles.

Central to Weizenbaum’s analysis of computing technologies was his clear sense (as far back as the 1960s) that the computer exists in society, that the computer impacts society, and that therefore those who will be impacted by the computer (all of us) should have some say in the matter. After all, the question of what tasks “ought not” be done by computers is clearly not one that can be left to the “computer enthusiasts.”

Questions to Ask…

At the end of his essay “Once more—A Computer Revolution” which appeared in the Bulletin of the Atomic Scientists in 1978, Weizenbaum concluded with a set of five questions. As he put it, these were the sorts of questions that “are almost never asked” when it comes to this or that new computer related development. These questions did not lend themselves to simple yes or no answers, but instead called for serious debate and introspection. Thus, in the spirit of that article, let us conclude this piece not with definitive answers, but with more questions for all of us to contemplate. Questions that were “almost never asked” in 1978, and which are still “almost never asked” in 2023. They are as follows:

“Who is the beneficiary of our much-advertised technological progress and who are its victims?

What limits ought we, the people generally and scientists and engineers particularly, to impose on the application of computation to human affairs?

What is the impact of the computer, not only on the economies of the world or on the war potential of nations, etc…but on the self-image of human beings and on human dignity?

What irreversible forces is our worship of high technology, symbolized most starkly by the computer, bringing into play?

Will our children be able to live with the world we are here and now constructing?”

As Weizenbaum put it, “much depends on answers to these questions.”

Much still depends on answers to these questions.
